Context and objectives

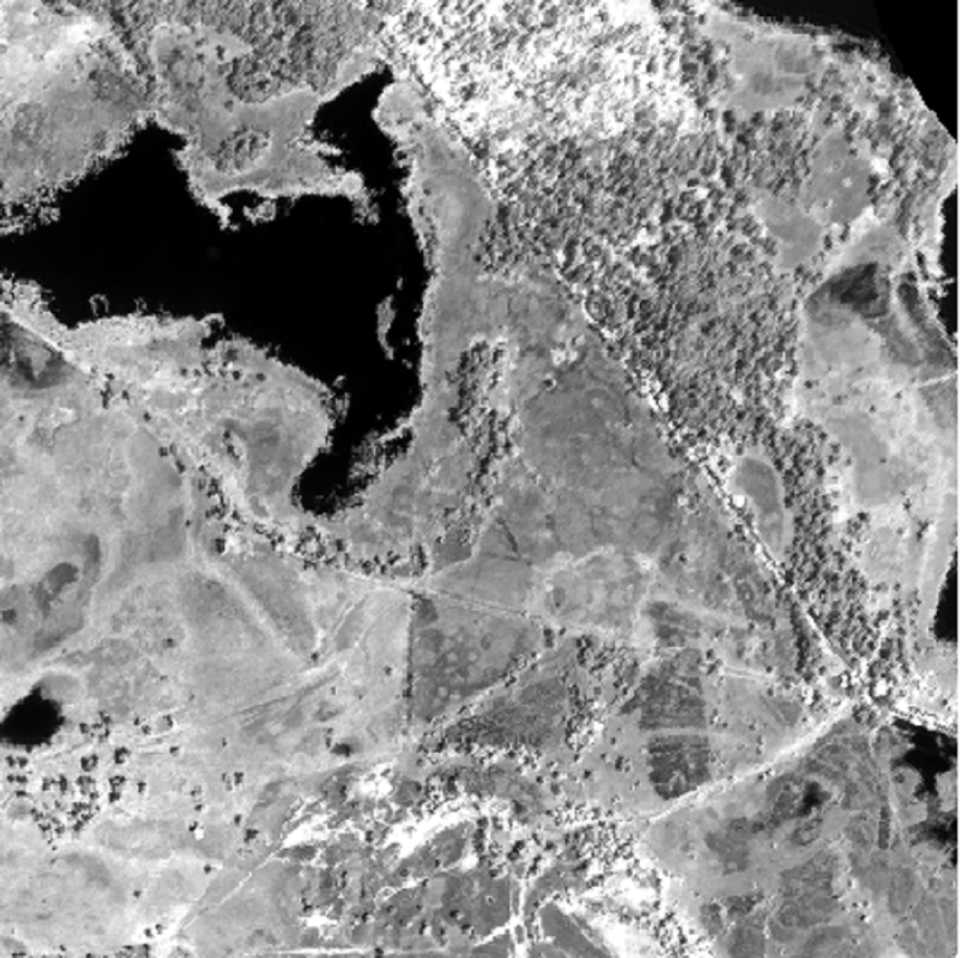

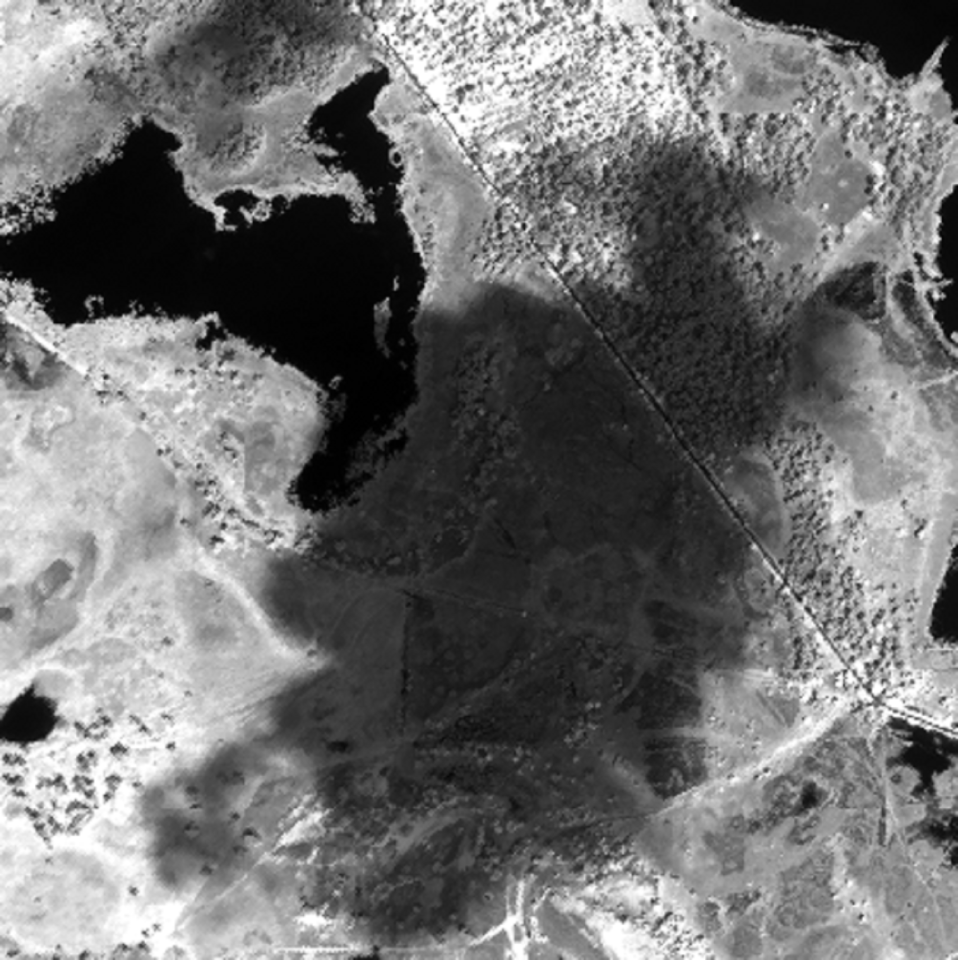

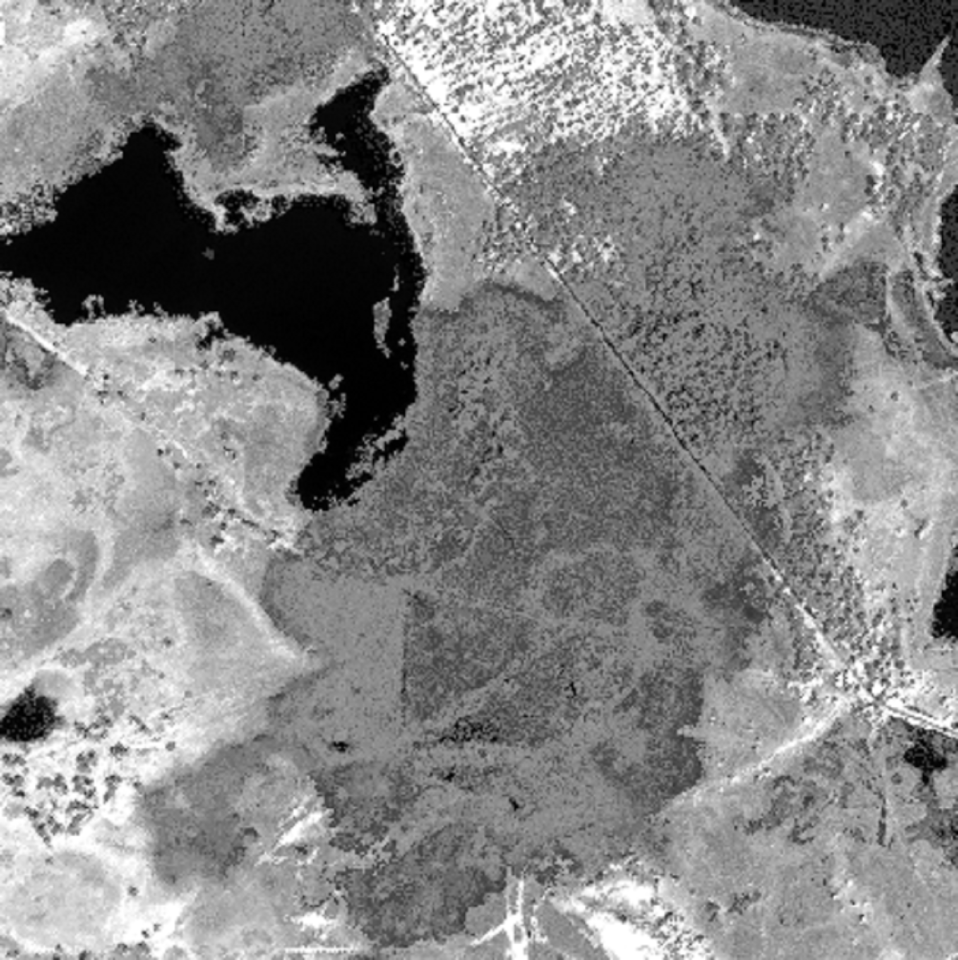

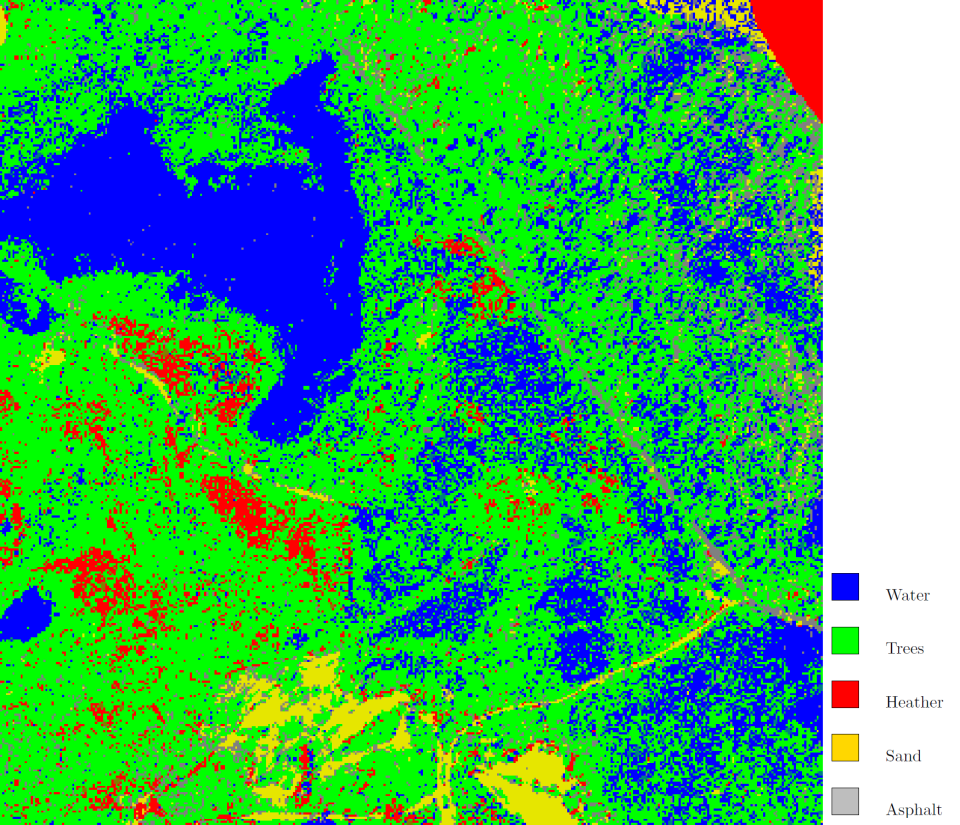

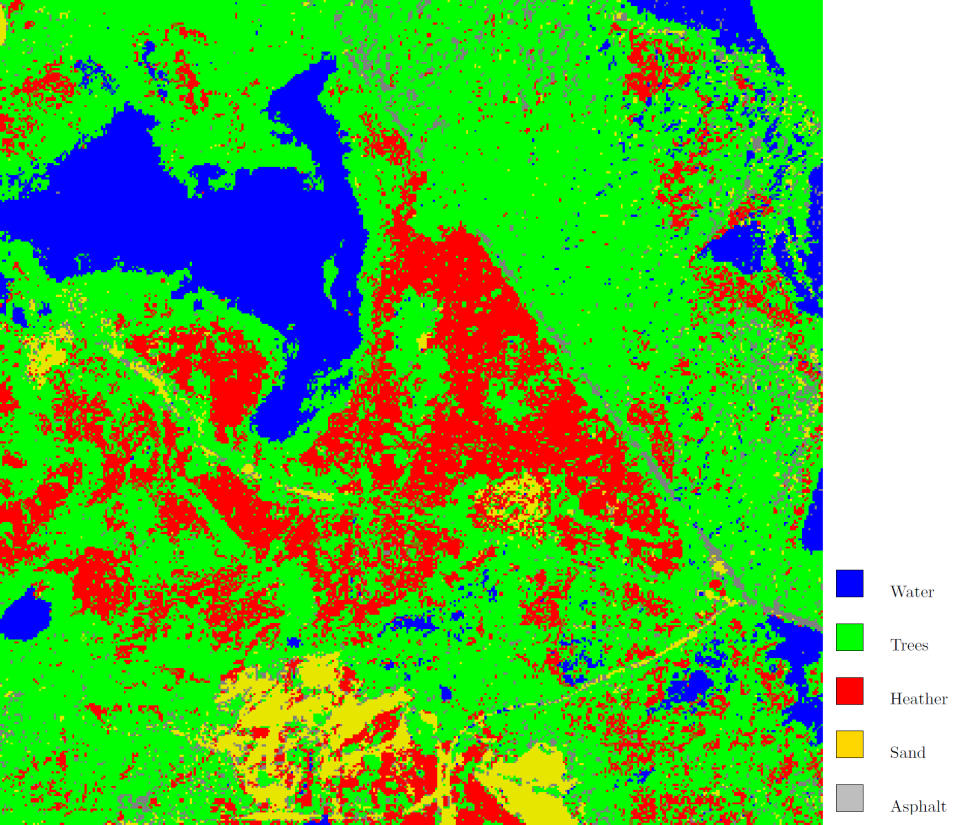

The main objective of this project is to explore and further develop the current semi-supervised and active learning techniques for the specific application of vegetation mapping. In particular, our aim is to tackle the problem of limited ground reference data by investigating the re-use of vegetation reference data. As a prototype problem, we envisage the classification of vegetation from hyperspectral images acquired at the same location on different occasions or at different locations containing similar vegetation types. The goal is then to design strategies for the re-use of reference samples obtained from one occasion or location to improve the classification at the other occasions or locations.

Project outcome

While state-of-the-art domain adaptation techniques mostly focus on specific end products, such as classification maps, this project developed a new general-purpose methodology. It represents each of the images in the two domains (source and target) as a graph and adapts the source image by matching its graph to that of the target image.
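To make the idea of adapting a source image by graph matching more concrete, the following toy sketch may help. It is not the algorithm developed in the project, whose details are not given here. It assumes that each domain is summarised by a handful of nodes (for example, mean spectra of vegetation types), that edge weights are Euclidean distances between nodes, and that a brute-force search over node permutations is acceptable. The function names `edge_weights`, `match_graphs` and `adapt`, as well as all numbers, are hypothetical.

```python
from itertools import permutations
from math import dist


def edge_weights(nodes):
    """Pairwise Euclidean distances between node spectra (the graph's edge weights)."""
    return [[dist(a, b) for b in nodes] for a in nodes]


def match_graphs(source_nodes, target_nodes):
    """Return perm such that source node i corresponds to target node perm[i].

    Assumption: brute-force search over all permutations, which is only
    feasible for a handful of nodes.
    """
    ws = edge_weights(source_nodes)
    wt = edge_weights(target_nodes)
    n = len(source_nodes)

    def cost(perm):
        # Disagreement between source edges and the permuted target edges.
        return sum((ws[i][j] - wt[perm[i]][perm[j]]) ** 2
                   for i in range(n) for j in range(n))

    return min(permutations(range(n)), key=cost)


def adapt(sample, node_index, source_nodes, target_nodes, perm):
    """Shift a source sample by the offset between its node and the matched target node."""
    src = source_nodes[node_index]
    tgt = target_nodes[perm[node_index]]
    return [x + (t - s) for x, s, t in zip(sample, src, tgt)]


# Toy data: three nodes with two-band spectra (made-up numbers).
source_nodes = [[0.0, 0.0], [1.0, 0.0], [0.0, 3.0]]
# Target: same structure, shifted by (5, 5) and listed in a different order.
target_nodes = [[5.0, 8.0], [5.0, 5.0], [6.0, 5.0]]

perm = match_graphs(source_nodes, target_nodes)

# Adapt one source sample that belongs to source node 1.
adapted = adapt([0.9, 0.1], 1, source_nodes, target_nodes, perm)
```

Because the matching in this sketch compares edge weights rather than the node spectra themselves, a uniform spectral shift between the two domains does not affect which nodes are paired. A realistic setting would need a scalable matching method and a richer adaptation step than a per-node offset.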

After successfully applying the developed algorithm to a difficult setting, i.e. reusing ground reference data in a classification problem on an image with a large spectral shift with respect to the image containing the ground reference, the technique was further extended to support multiple graph representations per domain. The advantage of this approach is that other image sources that are assumed to be less affected by the spectral shift, e.g. LIDAR, can be included to facilitate the adaptation of the hyperspectral image as well.

Following the development phase, the new graph matching methodologies were applied to two real-world cases: adapting multi-temporal images to each other and correcting the spectral shift between flight lines in a single campaign. While the results were promising, a few weaknesses were identified, namely the sensitivity of the methodology to the high dimensionality of hyperspectral imagery and the difficulty of robustly assessing its performance. Research will continue to mitigate these issues.

| | |
|---|---|
| Project leader(s): | UA - Vision Lab - Visielab |
| Belgian partner(s): | |
| International partner(s): | |
| Location: | Country: |
| Related presentations: | |
| Related publications: | |
| Website: | http://www.ua.ac.be/relearn |